Niconico stream rankings are one of the most frequently talked-about stats when it comes to early-season indicators of how a show might be performing. It’s been somewhat frustrating to me that they seem to get brought up at the beginning of every season, but how accurate their predictions were has never really been revisited. By comparing their basic effectiveness with the eventual disk sales results, hopefully we can get a little more context for how those numbers should be interpreted.

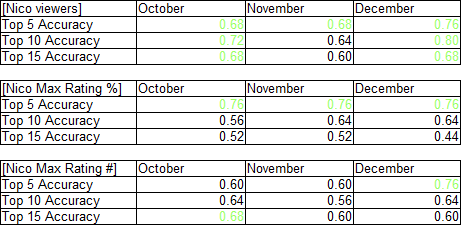

Nico ranks come with several numbers attached, so I’m looking at a few of them to see which ones worked best. I use 3 metrics: the number of viewers at the end of the episode stream, the percentage of viewers who gave the episode a 1 (the max score), and the product of those first two (i.e. the number of users who gave the episode a max score). All 3 of these metrics were averaged over episodes 1-4 (October), episodes 5-8 (November), and episodes 9 through 10, 11, 12, or 13, depending on the show's length (December). I then determined how accurate guesses based solely on each of these metrics would be if the top 5, 10, and 15 items on the list were assumed to have their first volume sell over 4k disks.
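As a rough sketch of the scoring described above (not the actual spreadsheet behind this post), here is how the three metrics and the top-N accuracy check could be computed. All show names and numbers are made up for illustration, and I'm assuming the max-rating count is taken per episode and then averaged; averaging first and multiplying afterward would give slightly different values.

```python
from statistics import mean

# Hypothetical per-episode data for one show in one month:
# (viewers at the end of the stream, % of viewers who rated it "1").
episodes = [(52000, 71.0), (48000, 68.5), (45000, 74.2), (47000, 70.3)]

def monthly_metrics(episodes):
    """Return (avg viewers, avg max-rating %, avg max-rating count)."""
    viewers = mean(v for v, _ in episodes)
    max_pct = mean(p for _, p in episodes)
    # Assumption: multiply per episode, then average.
    max_count = mean(v * p / 100 for v, p in episodes)
    return viewers, max_pct, max_count

def top_n_accuracy(scores, sold_over, n):
    """Guess 'over' for the top n shows by score and 'under' for the rest,
    then return the fraction of shows where the guess matched reality."""
    ranked = sorted(scores, key=scores.get, reverse=True)
    predicted_over = set(ranked[:n])
    correct = sum((show in predicted_over) == sold_over[show] for show in scores)
    return correct / len(scores)

# Hypothetical metric values and outcomes (True = v1 sold over 4k).
scores = {"A": 50000, "B": 40000, "C": 30000, "D": 20000, "E": 10000}
sold_over = {"A": True, "B": False, "C": True, "D": False, "E": False}

for n in (1, 2, 3):
    print(n, top_n_accuracy(scores, sold_over, n))
```

Note that a wrong guess can go either way: a show in the top N that undersold counts against the metric, and so does a show outside the top N that sold over 4k.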

It should be noted that only 25 of the 38 Fall shows I have v1 data for also have Nico rank data available, and 9 of those 25 had v1s sell over 4000 disks. In the interest of not polluting the sample, I’m just checking the accuracy of the rankings within that 25-show sample and excluding the other 13. This does leave the sample with a slightly different over/under ratio than the full seasonal sample (36% over instead of 34%).
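To see why the over/under ratio matters, consider one simple baseline: guess "under" for every show. The snippet below is a small sketch of that arithmetic using the two ratios quoted above. I'm assuming this always-under guess is the kind of baseline a null hypothesis refers to here; the function name is just illustrative.

```python
def always_under_accuracy(over_ratio):
    """Accuracy of guessing that no show's v1 sells over the threshold."""
    return 1 - over_ratio

in_sample_ratio = 9 / 25   # 9 of the 25 shows with Nico data sold over 4000
full_sample_ratio = 0.34   # over ratio quoted for the full 38-show sample

print(round(always_under_accuracy(in_sample_ratio), 2))    # 0.64
print(round(always_under_accuracy(full_sample_ratio), 2))  # 0.66
```

Under that assumption, the baseline to beat is a couple of points lower inside the 25-show sample than it is for the full season.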

Anyway, here’s how the various methods performed on the Fall data (detailed data here, breakdown doc here). Numbers for the ones that beat the .66 accuracy afforded by the best null hypothesis are shown in green:

Total number of viewers arguably performed the best, besting the null hypothesis outright in October and December. Max rating % did very well when only taking the top 5, but fairly poorly otherwise (the top 15 models barely beat out a coin flip model). Max rating number was fairly spotty, except as a broad-strokes indicator in October and a top-tier indicator in December.

This is data taken from a fairly small sample, but these numbers do suggest that the top tier of the rankings and the total number of stream viewers are at least better than random chance at picking which shows will succeed, something that couldn’t be said for most Torne DVR lists. How impressive that is depends on how well other indicators do and how well it holds up as the sample size grows.
