Quick recap: I’m looking at various no-cost indicators of popularity for anime and their related goods. First, I’m checking how well they correspond to disk sales by testing whether different applications of each statistic beat the accuracy of the null “every v1 will sell less than 4000 disks” prediction for a given season (66% for Fall, 59% for Summer). Later I’ll check how well these indicators corresponded to boosts in manga/LN source popularity (for works that were originally LN/manga), to contrast their predictive abilities at both high-cost and low-cost levels of interest.
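For anyone who wants to replicate the comparison, here is a minimal sketch of how a null accuracy and a "top N" model accuracy could be computed. It assumes a top-N model predicts that every series ranked N or better sells at least 4000 disks and everything else sells less; the function names and the sample numbers are made up for illustration and are not the data behind the charts.

```python
def accuracy(predicted_hit, actual_hit):
    """Fraction of series where the prediction matches the outcome."""
    matches = sum(p == a for p, a in zip(predicted_hit, actual_hit))
    return matches / len(actual_hit)


def null_accuracy(sales, threshold=4000):
    """Accuracy of predicting that every v1 sells below the threshold."""
    actual = [s >= threshold for s in sales]
    return accuracy([False] * len(sales), actual)


def top_n_accuracy(ranks, sales, n, threshold=4000):
    """Accuracy of predicting that only series ranked n or better clear the threshold."""
    actual = [s >= threshold for s in sales]
    predicted = [r <= n for r in ranks]
    return accuracy(predicted, actual)


# Hypothetical ranks and v1 sales, for illustration only.
ranks = [1, 2, 3, 4, 5, 6]
sales = [9000, 1200, 5000, 800, 700, 300]

print(null_accuracy(sales))               # share of series below the threshold
print(top_n_accuracy(ranks, sales, n=3))  # top-3 model accuracy
```

A model "beats the null" for a season when its accuracy is higher than the null accuracy on the same set of series.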

Nico stream rankings are often discussed as early-season indicators of disk sales, and my analysis of the Fall 2013 shows for which those numbers were available did find a number of different versions of the data to be more accurate than the null hypothesis. Adding another season should help control for any peculiarities of the Fall season.

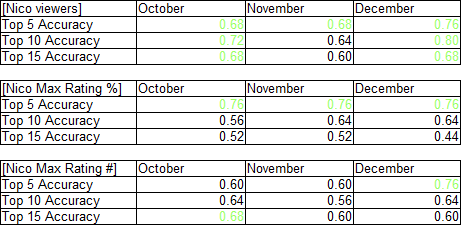

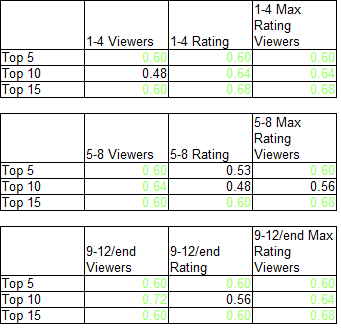

I computed the accuracy rates for the top 5/10/15 models using only the 25 Summer series with Nico stream data available, split into 3 averaged samples corresponding to the beginning, middle, and end (labeled 9-12, but occasionally 9-13 or 9-10) of each series. The individual rank data was compiled here, and the sorted version can be found here. The bare-bones summary of the results is below (green means an accuracy greater than that of the null hypothesis).

Note: the chart format groups by period, rather than by metric, as the Fall chart did.

That’s a lot of green, corresponding to a fairly useful indicator. While there are notably some top-tier performances that failed to lead to disk sales (Watamote was top 5 in half the metrics, top 10 in all of them), the general results point to an indicator that can be fairly discussed early on as a broad-basis indicator of disk-buyer popularity.

Peculiarly, the max rating percentage becomes a less accurate indicator for the later periods. This appears to be due to the rise of specific shows (Genshiken Nidaime, Rozen Maiden’s reboot) that lost viewers while maintaining the number of viewers giving the max scores; most likely, many of the people who had middling opinions of them checked out after the first month, leading to somewhat inflated scores.
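To make the arithmetic behind that explanation concrete, here is a tiny sketch with entirely hypothetical vote counts (not taken from the Nico data): if the number of max-score voters holds steady while the total number of voters shrinks, the max rating percentage climbs even though no one's opinion of the show has improved.

```python
def max_rating_share(max_votes, total_votes):
    """Share of survey respondents who gave the top score."""
    return max_votes / total_votes


# Hypothetical counts: the same 6000 fans give the max score throughout,
# but lukewarm viewers stop watching (and voting) after the first month.
early = max_rating_share(6000, 10000)
late = max_rating_share(6000, 7500)

print(f"early: {early:.0%}, late: {late:.0%}")
```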