Edit: Link to source document fixed.

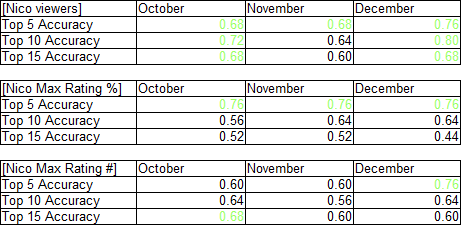

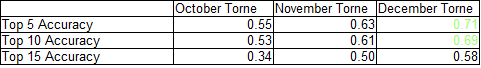

Like those of any other free-access site, or any single set of numbers, statistics from myanimelist should be used with caution. But that doesn’t mean they shouldn’t or can’t be used. In this article, I’m looking at the current rank and popularity of 38 shows from the Fall 2013 season on myanimelist to determine how they stack up against other methods of predicting disk sales. As before, note that any accuracy below the best null hypothesis of .66 is fairly insignificant.

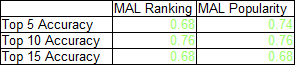

By the by, the myanimelist stats used in this piece were taken in late April 2014. Odds are they’ve changed a bit since the outset of the season, though shifts within a season tend not to be particularly cataclysmic. Most can probably be viewed as in a similar boat to December Torne/eps 9-12 Nico ranks, in that they’re made when the audience has more information about the show (i.e. has seen more episodes). I ordered the shows two ways, by rank/score and by popularity/total number of users, and then checked how many shows’ v1 sales each list correctly pegs as over or under 4000 disks when its top 5/10/15 shows are taken as the over-4000 picks. Data can be found on this doc, and the results are below (indicators greater than the null hypothesis in green):

Turns out, both indicators do about the same, each beating out the null hypothesis for all three list sizes. Out of the metrics I’ve examined so far, that’s the best overall performance. Of course, keep in mind that this is a fairly small sample, and these indicators may be telling in other ways (notably about source material sales). It’s still fairly early in the project to say much, beyond the fact that mal stats are at least a little more indicative than random chance.
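
For anyone who wants to rerun the top 5/10/15 check described earlier, here is a minimal sketch of how it could be done. It is my reading of the method, not the exact spreadsheet logic: the function name `top_n_accuracy`, the field names (`title`, `score`, `members`, `v1_sales`), and the four sample shows are all made-up placeholders, not data from the doc.

```python
def top_n_accuracy(shows, key, n, threshold=4000):
    """Treat the top-n shows by `key` as predicted over-threshold sellers,
    everything else as predicted under, and return the fraction of shows
    whose prediction matches their actual v1 sales."""
    # Higher score / more members ranks first.
    ranked = sorted(shows, key=lambda s: s[key], reverse=True)
    predicted_hits = {s["title"] for s in ranked[:n]}
    correct = sum(
        (s["title"] in predicted_hits) == (s["v1_sales"] > threshold)
        for s in shows
    )
    return correct / len(shows)


# Hypothetical placeholder data, only to show the shape of the input.
shows = [
    {"title": "A", "score": 8.5, "members": 300000, "v1_sales": 12000},
    {"title": "B", "score": 7.9, "members": 150000, "v1_sales": 3000},
    {"title": "C", "score": 7.2, "members": 90000, "v1_sales": 5000},
    {"title": "D", "score": 6.8, "members": 40000, "v1_sales": 800},
]

for n in (1, 2):
    print(n, top_n_accuracy(shows, "score", n), top_n_accuracy(shows, "members", n))
```

With the real 38-show list, the same function would be called with n set to 5, 10, and 15 for each ordering, and each result compared against the .66 null hypothesis.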
